GTrans: Grouping and Fusing Transformer Layers for Neural Machine Translation

Abstract

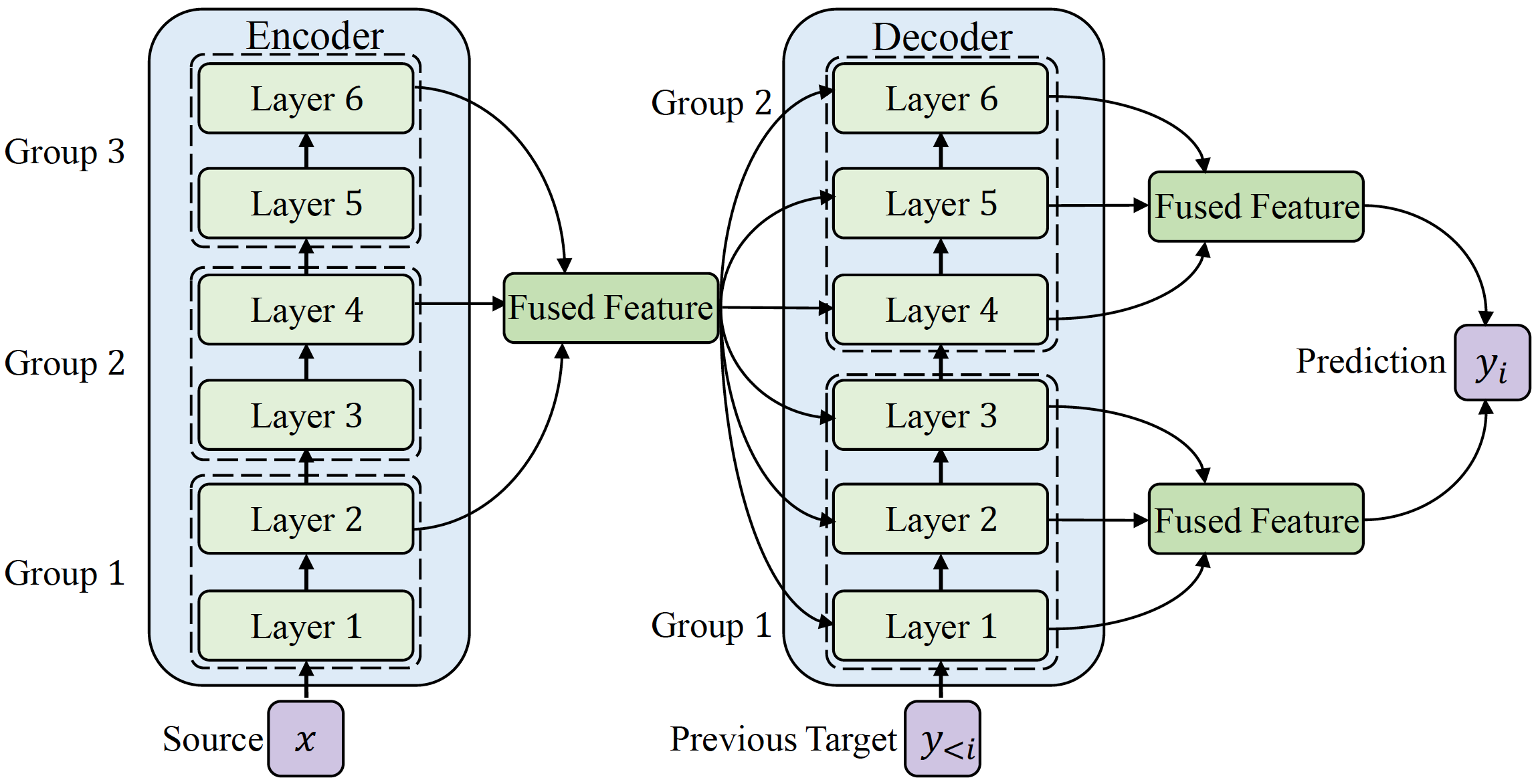

The Transformer architecture, built from stacked encoder and decoder layers, has achieved significant progress in neural machine translation. However, the vanilla Transformer mainly exploits the top-layer representation, assuming that the lower layers provide trivial or redundant information and thus ignoring bottom-layer features that are potentially valuable. In this work, we propose the Group-Transformer model (GTrans), which flexibly divides the multi-layer representations of both the encoder and the decoder into different groups and then fuses these group features to generate target words. To corroborate the effectiveness of the proposed method, extensive experiments and analyses are conducted on three bilingual translation benchmarks and two multilingual translation tasks, namely the IWSLT-14, IWSLT-17, LDC, WMT-14, and OPUS-100 benchmarks. Experimental and analytical results demonstrate that our model consistently outperforms its Transformer counterparts. Furthermore, it can be successfully scaled up to 60 encoder layers and 36 decoder layers.
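To make the grouping-and-fusing idea concrete, the following is a minimal pure-Python sketch, not the authors' implementation. It assumes that layer outputs are ordered bottom to top, that layers are split into contiguous groups of near-equal size, that each group is summarized by an element-wise mean, and that groups are fused with a softmax-weighted sum whose scores stand in for learnable parameters. The names `group_layers`, `mean_vector`, `softmax`, and `fuse_groups` are hypothetical; a real model would operate on tensors of shape (sequence length, hidden size) rather than on single vectors.

```python
import math
from typing import List, Sequence

Vector = List[float]


def group_layers(layer_outputs: Sequence[Vector], num_groups: int) -> List[List[Vector]]:
    """Split layer outputs (bottom to top) into num_groups contiguous groups.

    Assumption: groups are contiguous and as equal in size as possible;
    the grouping rule used in GTrans itself may differ.
    """
    n = len(layer_outputs)
    if not 1 <= num_groups <= n:
        raise ValueError("num_groups must be between 1 and the number of layers")
    base, extra = divmod(n, num_groups)
    groups, start = [], 0
    for g in range(num_groups):
        size = base + (1 if g < extra else 0)  # earlier groups absorb the remainder
        groups.append(list(layer_outputs[start:start + size]))
        start += size
    return groups


def mean_vector(vectors: Sequence[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors (the hypothetical group feature)."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def softmax(scores: Sequence[float]) -> List[float]:
    """Turn raw scores into positive weights that sum to one."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_groups(layer_outputs: Sequence[Vector], num_groups: int,
                group_scores: Sequence[float]) -> Vector:
    """Hypothetical fusion: average each group, then take a softmax-weighted sum.

    group_scores stands in for learnable per-group parameters (an assumption).
    """
    if len(group_scores) != num_groups:
        raise ValueError("need exactly one score per group")
    group_feats = [mean_vector(g) for g in group_layers(layer_outputs, num_groups)]
    weights = softmax(group_scores)
    dim = len(group_feats[0])
    return [sum(w * f[i] for w, f in zip(weights, group_feats)) for i in range(dim)]


if __name__ == "__main__":
    # Six toy "layers", each a 2-dimensional representation of one token.
    layers = [[float(k), 1.0] for k in range(6)]
    # Equal scores give equal weights, so this averages the three group means.
    print(fuse_groups(layers, num_groups=3, group_scores=[0.0, 0.0, 0.0]))  # approximately [2.5, 1.0]
```

Contiguous grouping is simply the most direct choice that preserves layer order, and the softmax keeps the fusion weights positive and summing to one, so the fused output is a convex combination of the group features. Both are illustrative assumptions rather than details taken from the paper.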

@article{gtrans,
  title = {GTrans: Grouping and Fusing Transformer Layers for Neural Machine Translation},
  author = {Yang, Jian and Yin, Yuwei and Yang, Liqun and Ma, Shuming and Huang, Haoyang and Zhang, Dongdong and Wei, Furu and Li, Zhoujun},
  journal = {IEEE/ACM Transactions on Audio, Speech, and Language Processing},
  year = {2022},
  pages = {1--10},
  doi = {10.1109/TASLP.2022.3221040},
  url = {https://ieeexplore.ieee.org/document/9944969},
}